What we learned at the LSC-ITC conference of civil justice professionals about the state of AI & A2J

Last week, our team at the Legal Design Lab presented at the Legal Services Corporation Innovations in Technology Conference on several topics: how to build better legal help websites, how to improve e-filing and forms in state court systems, and how to use texting to assess brief services for housing matters.

One of the largest and most popular sessions we co-ran was on Generative AI and Access to Justice. In this panel for several hundred participants, Margaret Hagan, Quinten Steenhuis of Suffolk LIT Lab, and Hannes Westermann of the CyberJustice Lab in Montreal spoke about opportunities, user research, demonstrations, and the risks and safety of AI and access to justice.

We started the session by polling the participants. We asked them a few questions:

- Are you optimistic, pessimistic, or in between on the future of AI and access to justice?

- What are you working on in this area? Do you have projects to share?

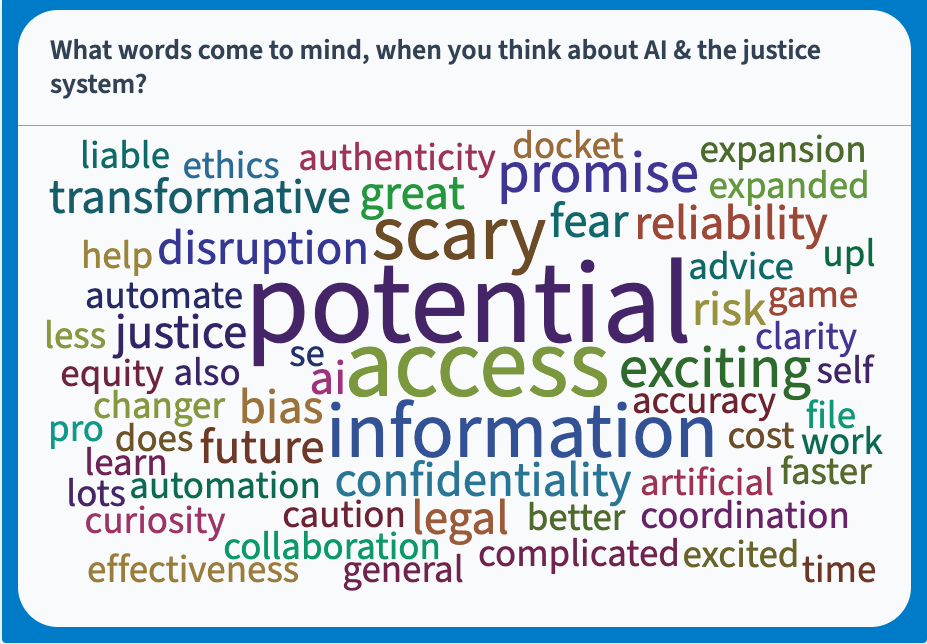

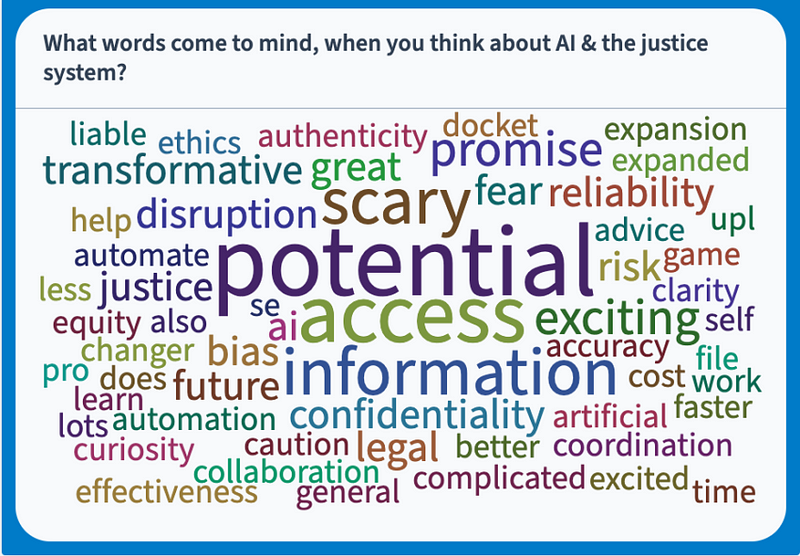

- What words come to mind when you think about AI and access to justice?

Margaret presented on opportunities for AI & A2J, the user research the Lab is doing on how people use AI for legal problem-solving, and the metrics that justice experts have said to look at when evaluating AI. Quinten explained Generative AI and demonstrated Suffolk LIT Lab’s document automation AI work. Hannes presented on several AI-A2J projects he has worked on, including JusticeBot for housing law in Quebec, Canada, and LLMediator, which he has worked on with Jaromir Savelka and Karim Benyekhlef to improve dispute resolution among people in a conflict.

We then went through a hands-on group exercise to spot AI opportunities in a particular person’s legal problem-solving journey & talk through risks, guardrails, and policies to improve the safety of AI.

Here is some of what we learned at the presentation and some thoughts about moving forward on AI & Access to Justice.

Cautious Optimism about AI & Justice

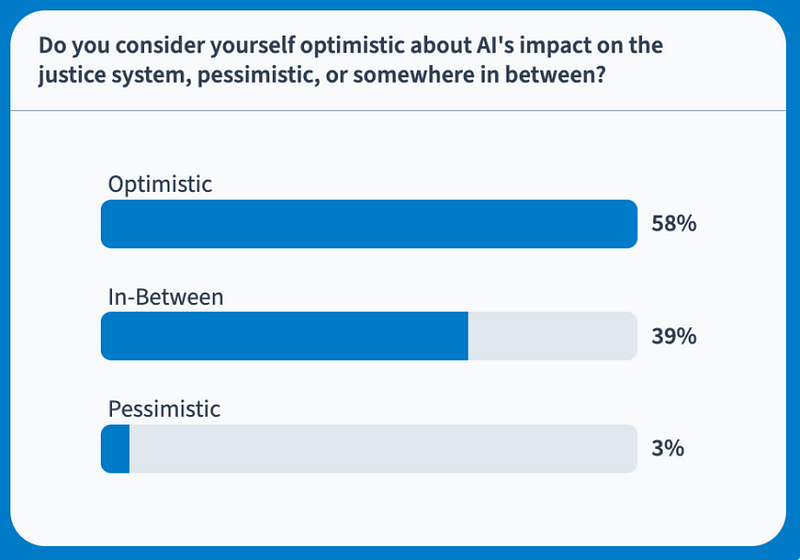

Many justice professionals, especially the folks who had come to this conference and joined the AI session, are optimistic about Artificial Intelligence’s future impact on the justice system — or are waiting to see. A much smaller percentage is pessimistic about how AI will play out for access to justice.

We had 38 respondents to our poll before our presentation started, and here’s the breakdown of optimism-pessimism.

In our follow-up conversations, we heard regularly that people were excited about AI’s potential to provide services affordably and at scale to more people (aka ‘closing the justice gap’), but that regulation and controls need to be in place to prevent harm.

Looking at the word cloud of people’s responses to the question “What words come to mind when you think about AI & the justice system?” further demonstrates this cautious optimism (with a focus on smart risk mitigation to empower good, impactful innovation).

This cautious optimism is in contrast to a totally cold, prohibitive approach to AI. If we saw more pessimism, we might have heard more people expressing that there should be no use of AI in the justice system. But at least among the group who attended this conference, we saw little of that perspective (that AI should be avoided or shut down in legal services, for fear of its potential for harm). Rather, people seemed open to exploring, testing, and collaborating on AI & Access to Justice projects, as long as there was a strong focus on protecting against bias, mistakes, and other risks that could lead to harm to the public.

We need to talk about risks more specifically

That said, despite the pervasive concern about risk and harm, there is not yet a clear framework for how to protect people from them.

This could be symptomatic of the broader way that legal services have been regulated in the past. Instead of talking about specific risks, we speak in generalizations about ‘protecting the consumer’. We don’t have a clear typology of what mistakes can happen, what harms can occur, how important or severe these are, and how to protect against them.

Because of this lack of a clear risk framework, most discussions about how to spot risks and harms of AI are general, anecdotal, and high-level. Even if everyone agrees that we need safety rules, including technical and policy-based interventions, we don’t have a clear menu of what those can be.

This is likely to be a large, multi-stakeholder, multi-phased process — but we need more consensus on a working framework of what risks and mistakes to watch out for, how much to prioritize them, and what kinds of things can protect people from them. Hopefully, there will be more government agencies and cross-state consortiums working on these actionable policy frameworks that can encourage responsible innovation.

Demos & User Journeys get to good AI brainstorms

Talking about AI (or brainstorming about it) can be intimidating for non-technical folks in the justice system. They may find it hard to know where to begin when thinking about how AI could help them deliver services better, how clients could benefit, or how it could play a good role in delivering justice.

Demonstration projects, like those shared by Quinten and Hannes, are beneficial to legal aid, court staff, and other legal professionals. These concrete, specific demos allow people to see exactly how AI solutions could play out, and then riff on them to think through variations of how AI could help with tasks for clients, service providers, executive directors, funders, or others.

Demo projects don’t have to be live, in-the-field AI efforts. Rather, showing early-stage versions of AI or even more provocative ‘pie-in-the-sky’ AI applications can help spark more creativity in the justice professional community, get into more specific conversations about risks and harms, and help drive momentum to make good responsible innovation happen.

Aside from demos of AI projects, user journey exercises can also be a great way to spark a productive brainstorm of opportunities, risks, and policies.

In the second half of the presentation, we ran an interactive workshop. We shared a user story of someone with a landlord problem: the heat in their Milwaukee apartment was off and wasn’t getting fixed.

We walked through a status quo user journey, in which they tried to seek legal help, got delayed, made some connections, and got connected with someone to do a demand letter.

We asked all of the participants to work in small groups to identify where in the status quo user journey AI could be of help. They brainstormed lots of ideas for particular touchpoints and stakeholders: the user, friends and family, community organizations, legal aid, and pro bono groups. We then asked them to spot safety risks and possible harms, and finally to propose ways to mitigate these risks.

This kind of specific user journey and case-type exercise can help people see more clearly how to apply the general things they’re learning about AI to specific legal services. It inspires more creativity and gets people collaborating on where the priorities should be.

Need for a Common AI-A2J Agenda of Tasks

During our exercise and follow-up conversations, we saw a laundry list emerge of possible ways AI could help different stakeholders in the justice system. This flare-out of ideas is exciting but also overwhelming.

Which ideas are worth pursuing, funding, and piloting first?

We need a working agenda of AI and Access to Justice tasks. Participants discussed many different kinds of tasks that AI could help with:

- drafting demand letters,

- doing smarter triage,

- referring people to services that can be a good fit,

- screening frequent, repeat plaintiffs’ filings for their accuracy and legitimacy,

- providing language access,

- sending reminders and empowerment coaching,

- helping people fill in forms, and beyond.

It’s great that there are so many different ideas about how AI could be helpful, but to get more collaboration from computer scientists and technologists, we need a clear set of goals that prioritizes among these tasks.

Ideally, this common task list would be organized around what is feasible and impactful for service providers and community members. This task list could attract more computer scientists to help us build, fine-tune, test, and implement generative AI that can achieve these tasks.

Our group at Legal Design Lab is hard at work compiling this possible list of high-impact, lower-risk AI and Access to Justice tasks. We will be making it into a survey and asking as many people as possible in the justice professional community to rank which tasks would be the most impactful if AI could do them.

This prioritized task list will then be useful in engaging more AI technologists and academic partners to see if and how we can build these models, what benchmarks we should use to evaluate them, and how we can start doing limited, safety-focused pilots of them in the field.

Join our community!

Our group will be continuing to work on building a strong community around AI and access to justice, research projects, models, and interdisciplinary collaborations. Please find more of our Stanford Legal Design Lab AI & Access to Justice Initiative at this link, and sign up here to stay notified about what we’re doing.